Turn Scattered AI Concerns Into Structured Risk Records

Most AI risk is discussed in conversations or tracked in spreadsheets, which leaves prioritization unclear and makes accountability harder than it needs to be. The AI Risk Register offers a single place to document what could go wrong, understand the potential impact, and track how each risk is managed. See for free how a structured register takes shape in practice.

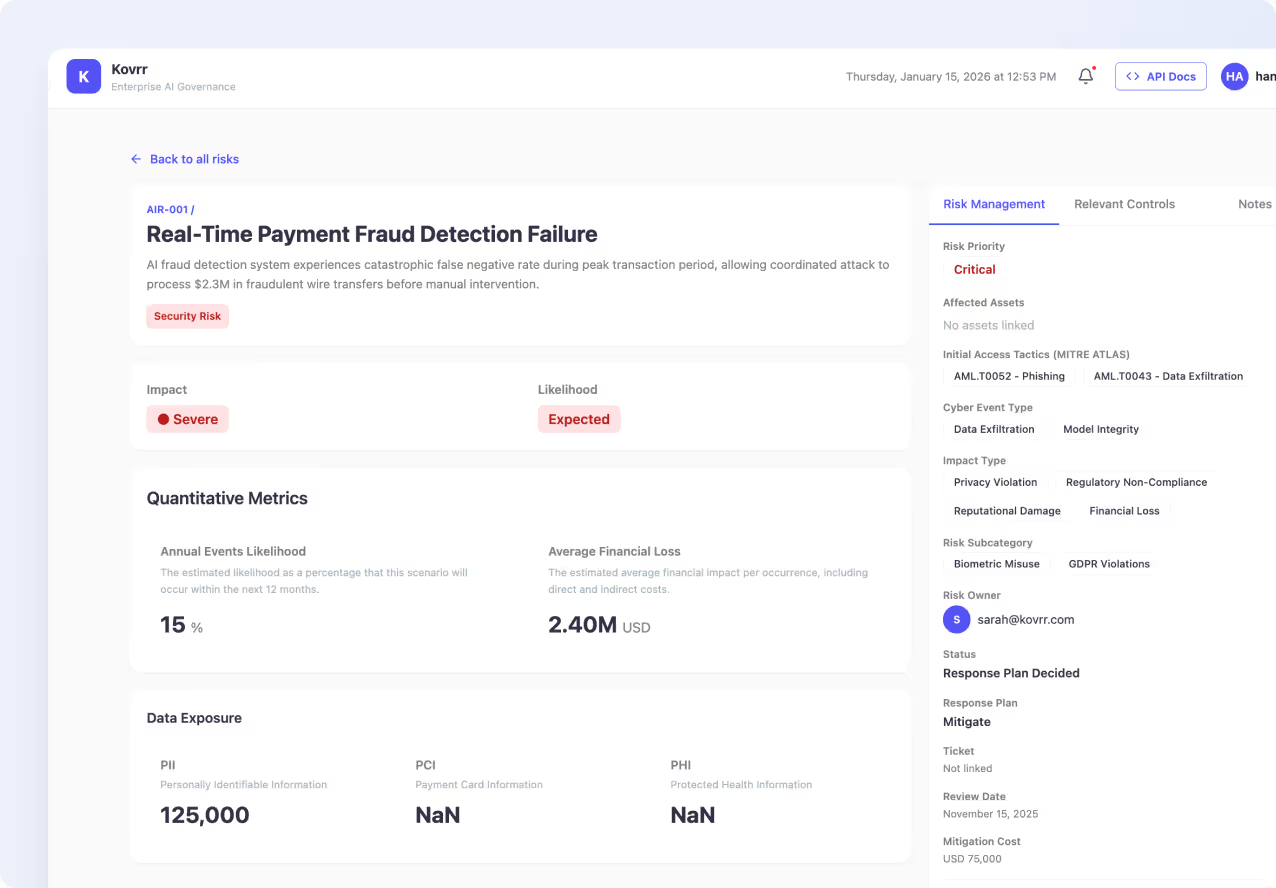

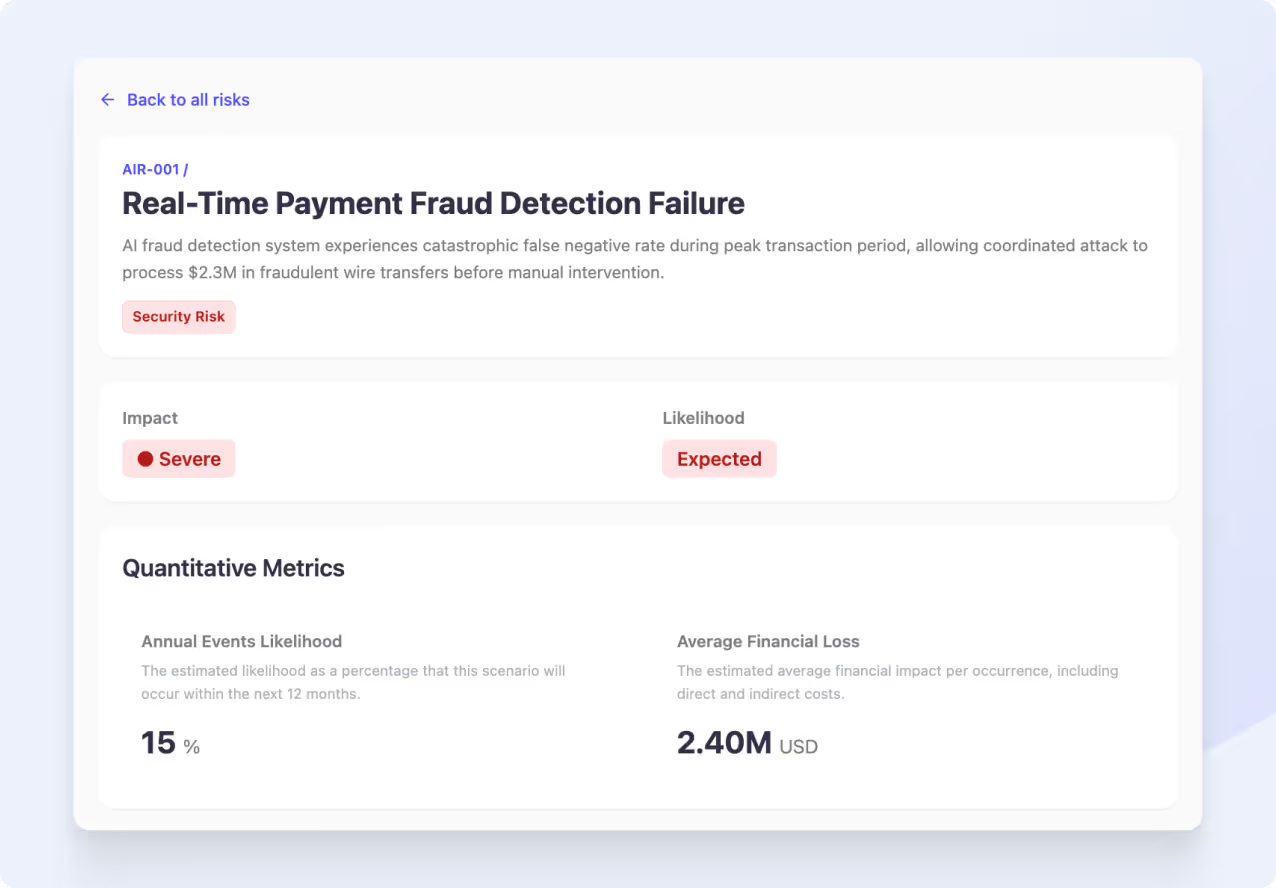

What Happens When You Add an AI Loss Scenario

Instead of writing notes in isolation, you build a complete view of the risk in one interface. You can define the type of AI risk, set qualitative impact and likelihood, and add quantitative metrics that reflect potential loss or frequency. The result is a risk entry that holds context, assumptions, and analysis together, without relying on spreadsheets.
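For readers who think in data structures, here is a minimal sketch of what such an entry might hold. It is not the product's actual schema: the class name `RiskEntry`, every field name, the three-level scale, and the sample figures are assumptions made for illustration only.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import List, Optional


class Level(IntEnum):
    """Hypothetical three-step qualitative scale."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class RiskEntry:
    """Illustrative risk record; all field names are assumptions."""
    title: str
    risk_type: str                              # e.g. "data leakage"
    impact: Level                               # qualitative impact
    likelihood: Level                           # qualitative likelihood
    loss_per_event: Optional[float] = None      # quantitative: estimated loss per event
    events_per_year: Optional[float] = None     # quantitative: estimated frequency
    assumptions: List[str] = field(default_factory=list)

    def qualitative_score(self) -> int:
        """Simple impact x likelihood score for ranking entries."""
        return int(self.impact) * int(self.likelihood)

    def annualized_loss(self) -> Optional[float]:
        """Loss per event times yearly frequency, if both are provided."""
        if self.loss_per_event is None or self.events_per_year is None:
            return None
        return self.loss_per_event * self.events_per_year


# Made-up example values, not real data.
entry = RiskEntry(
    title="Chatbot exposes customer data",
    risk_type="data leakage",
    impact=Level.HIGH,
    likelihood=Level.MEDIUM,
    loss_per_event=200_000.0,
    events_per_year=0.5,
    assumptions=["Loss figure is a rough internal estimate"],
)
```

Keeping the qualitative rating, the quantitative estimate, and the stated assumptions on one record is what lets an entry be compared with others without consulting a separate spreadsheet.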

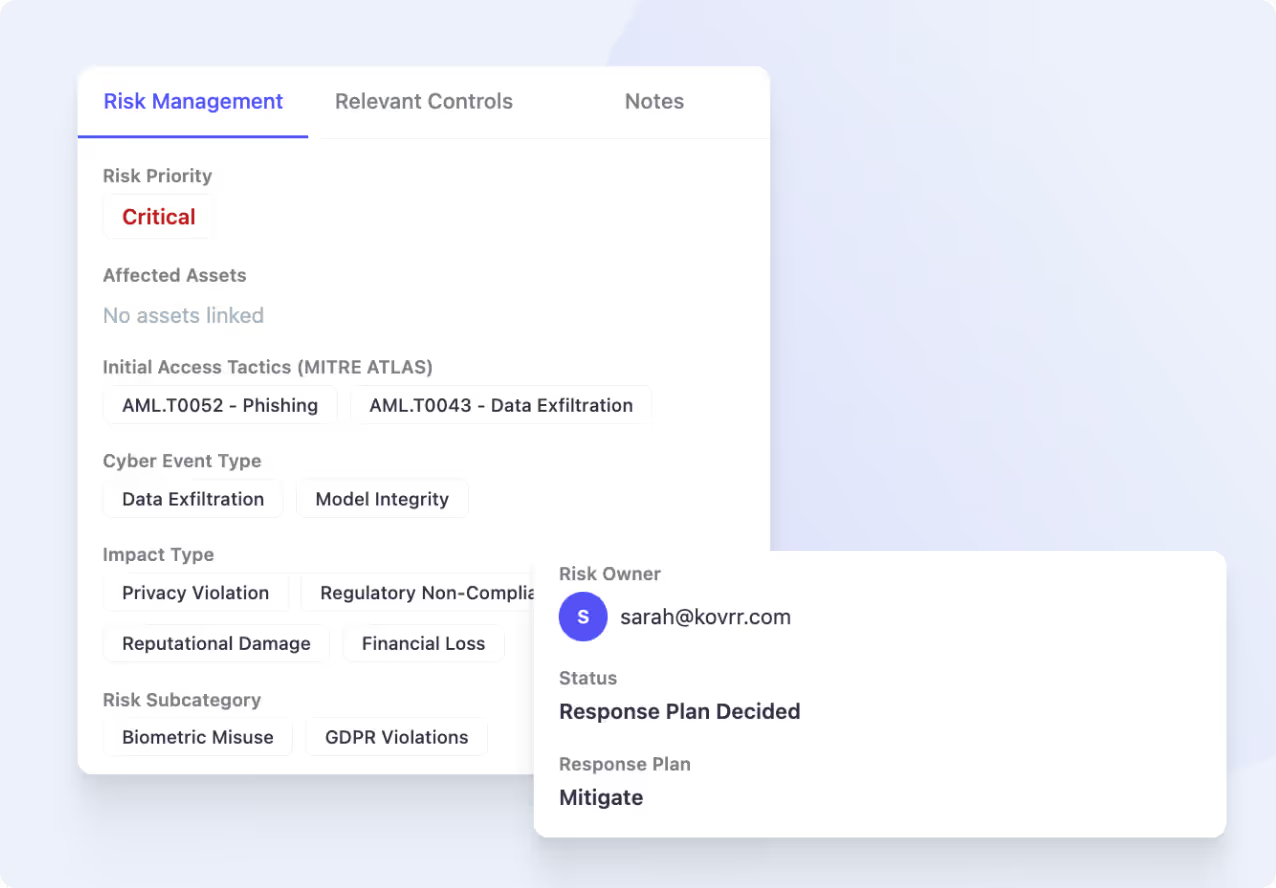

Progress From Identification to Management

Once a scenario exists, the register supports active risk management. You can link affected assets, document how the risk could be triggered, and categorize the event and impact types involved. Ownership and status are visible at a glance, so it’s clear who is responsible. Nothing gets lost between tools, and progress doesn’t rely on memory or manual tracking.

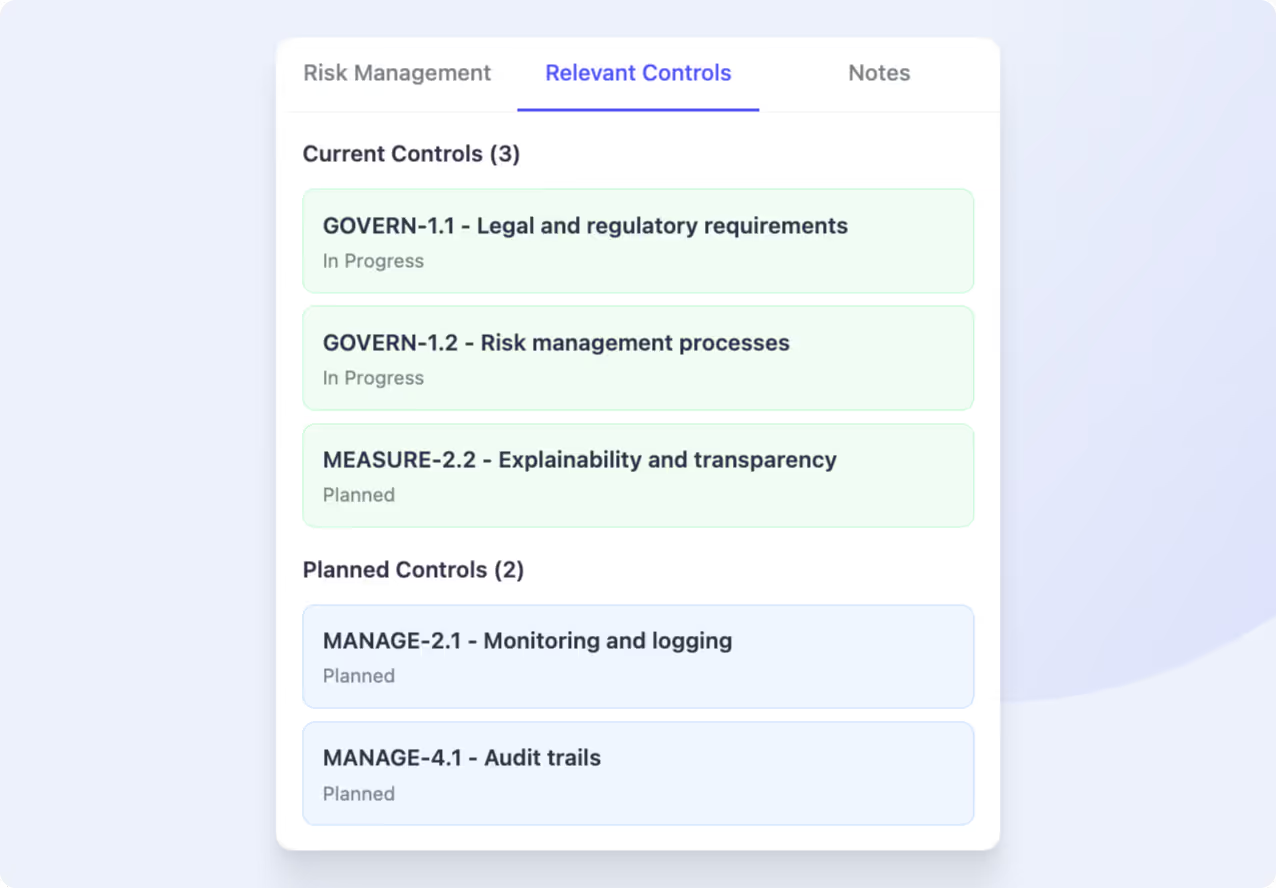

Connect AI Risks to Controls and Evidence

Each scenario can be tied to relevant controls, creating traceability between identified risks and the safeguards intended to address them. This alignment helps explain how risks are mitigated, not just that they exist. Notes and attachments keep supporting context close at hand. Whether it’s documentation or supporting analysis, everything stays connected to the scenario.
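The idea of traceability can be sketched in a few lines. This is only an illustration under stated assumptions: `Control`, `Scenario`, `traceability`, and `unmitigated` are hypothetical names, and the sample scenarios and controls are invented rather than taken from the product.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Control:
    """Illustrative safeguard record (hypothetical fields)."""
    control_id: str
    description: str


@dataclass
class Scenario:
    """Illustrative scenario record (hypothetical fields)."""
    name: str
    control_ids: List[str] = field(default_factory=list)
    attachments: List[str] = field(default_factory=list)  # notes or evidence file names


def traceability(scenarios: List[Scenario], controls: List[Control]) -> Dict[str, List[str]]:
    """Map each scenario name to the descriptions of its linked controls."""
    by_id = {c.control_id: c for c in controls}
    return {
        s.name: [by_id[cid].description for cid in s.control_ids if cid in by_id]
        for s in scenarios
    }


def unmitigated(scenarios: List[Scenario]) -> List[str]:
    """Names of scenarios that have no linked control yet."""
    return [s.name for s in scenarios if not s.control_ids]


# Made-up example data.
controls = [
    Control("C-1", "Human review of model outputs"),
    Control("C-2", "Prompt input filtering"),
]
scenarios = [
    Scenario("Prompt injection", control_ids=["C-2"], attachments=["pentest-notes.pdf"]),
    Scenario("Biased loan decision"),
]

trace = traceability(scenarios, controls)
gaps = unmitigated(scenarios)
```

Here `trace` shows which safeguards address each scenario, while `gaps` lists the scenarios that still have none.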

Why Start With Five AI Loss Scenarios?

You don’t need a full register to understand the value of structure. By the time five scenarios are added, patterns emerge. Priorities become clearer. Gaps in ownership or mitigation stand out. This experience is intentionally limited so you can focus on learning the workflow, seeing how risks are captured, and deciding how you want to manage AI exposure.

Turn Your AI Risks Into Something You Can Manage

Add real AI risks using a structured register designed for active oversight. Capture context in one place and see how governance takes shape as scenarios accumulate.