What Data Is Required for EU AI Act Compliance

April 9, 2026

TL;DR

- The EU AI Act requires organizations to maintain structured documentation demonstrating how AI systems are designed, governed, and monitored throughout their lifecycle.

- Compliance depends on maintaining records covering training data governance, technical system design, risk management processes, and operational oversight for high-risk AI systems.

- Article 10 requires organizations to document how training, validation, and testing datasets are sourced, evaluated for quality, and assessed for potential bias.

- Technical documentation under Article 11 and logging requirements under Article 12 allow regulators to review how AI systems function and trace how outputs are produced.

- Organizations that establish centralized documentation processes early will be better positioned to demonstrate EU AI Act compliance as enforcement deadlines approach.

Why Documentation Sits at the Core of EU AI Act Compliance

The EU AI Act places significant emphasis on documentation because regulatory oversight depends on an organization's ability to demonstrate how its AI systems operate and how associated risks are managed. Compliance is not determined solely by how an AI system performs, but by whether the organization can provide evidence that appropriate governance, risk controls, and oversight mechanisms are in place throughout the system lifecycle.

Several provisions of the regulation establish this documentation-first approach. Article 9, for instance, requires organizations responsible for high-risk AI systems to maintain a formal risk management process, documented in a way that shows how risks are identified, evaluated, and mitigated over time. Article 11 further requires providers of high-risk AI systems to maintain technical documentation describing system design, development methods, and performance testing. Article 12 introduces record-keeping obligations, requiring that high-risk AI systems generate logs capable of supporting traceability and regulatory review.

Documentation, under this framework, functions as the primary mechanism through which compliance is demonstrated. Regulators expect organizations to maintain clear records showing how AI systems were built, what data informed their development, how risks were assessed, and how performance is monitored after deployment. Organizations that cannot produce that evidence when called upon will struggle to show that their AI systems operate within the governance standards the Act demands, regardless of how well those systems actually perform.

The Categories of Information Organizations Must Maintain

EU AI Act compliance requires organizations to maintain several categories of information that collectively demonstrate how AI systems are designed, governed, and monitored. These records allow regulators to evaluate whether appropriate safeguards are in place throughout the system lifecycle. Rather than relying on a single report or periodic assessment, the regulation expects documentation to be maintained continuously across multiple areas of AI development and operation.

Data documentation is one of the most significant categories. Article 10 establishes expectations for data governance by requiring organizations to demonstrate that the datasets used to train, validate, and test AI systems meet defined quality standards. Those requirements include records of dataset origin, evidence of representativeness, and documentation of the processes used to identify and address potential bias. The underlying premise is that a system's outputs can only be trusted if the data on which it was built can be accounted for.

Technical system documentation forms another core category. Article 11 requires providers of high-risk AI systems to maintain detailed descriptions of system design, intended purpose, development methodology, and testing procedures. Rather than serving as an internal reference, this documentation functions as a regulatory artifact: the evidence base regulators draw on when evaluating whether a system was developed and assessed according to appropriate standards.

Additional categories include logging and record keeping under Article 12, which enable traceability of system outputs and decisions, and risk management documentation under Article 9, which records how potential harms are identified and mitigated. Governance documentation, which covers oversight procedures and internal compliance policies, sits across all of these categories, providing the institutional layer that holds the broader framework together.

Training, Validation, and Testing Data Requirements

Data governance occupies a central role in EU AI Act compliance because the performance and reliability of AI systems depend heavily on the quality of the data used during development. Article 10 establishes requirements for how organizations must manage the datasets used to train, validate, and test high-risk AI systems. The provisions are grounded in the straightforward premise that an AI system can only be as trustworthy as the data it was built on.

Organizations must maintain documentation describing the datasets used during model development. That documentation should include records of how data was collected, where it originated, and the processes applied to prepare it for training and evaluation. Regulators expect organizations to demonstrate that datasets are relevant to the intended purpose of the AI system and that they reflect the conditions under which the system will operate once deployed. Under the EU AI Act, dataset relevance is a compliance requirement, not merely an engineering consideration.
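One way to keep these dataset records consistent is to capture them in a fixed structure. The sketch below is illustrative only: the `DatasetRecord` class, its field names, and the sample values are assumptions made for this example, not a schema prescribed by the Act.

```python
from dataclasses import dataclass, field, asdict
import json


@dataclass
class DatasetRecord:
    """Hypothetical record describing one dataset used during development."""
    name: str
    role: str                # "training", "validation", or "testing"
    origin: str              # where the data came from
    collection_method: str   # how the data was collected
    intended_purpose: str    # the system purpose the data is meant to support
    preparation_steps: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Return the names of fields that were left empty."""
        return [key for key, value in asdict(self).items() if not value]


# Sample values are invented for illustration.
record = DatasetRecord(
    name="loan-applications-2024",
    role="training",
    origin="internal CRM export",
    collection_method="",  # deliberately left blank to show the gap check
    intended_purpose="credit risk scoring",
    preparation_steps=["deduplicated", "removed direct identifiers"],
)

print(json.dumps(asdict(record), indent=2))
print(record.missing_fields())
```

A check like `missing_fields()` can run whenever a dataset is registered, so incomplete records surface before a model is trained on the data rather than during a review.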

Article 10 also places heavy emphasis on representativeness and data quality. Organizations must evaluate whether training and testing data appropriately reflect the populations, environments, and conditions the AI system is designed to address. Procedures for identifying errors and potential bias within datasets must be documented alongside the mitigation measures applied to address them. Regulators expect data quality to be actively managed rather than assumed.
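A representativeness check can be as simple as comparing the share of each group in a dataset with the share expected in the population the system will serve. The following is a minimal sketch under stated assumptions: the function name, the 5-percentage-point tolerance, and the age bands are all hypothetical, and a real assessment would likely use more than one statistic.

```python
from collections import Counter


def representation_gaps(samples, expected_shares, tolerance=0.05):
    """Compare observed group shares in a dataset against expected shares.

    Returns {group: (observed_share, expected_share)} for every group whose
    observed share differs from the expected share by more than `tolerance`.
    """
    counts = Counter(samples)
    total = len(samples)
    gaps = {}
    for group, expected in expected_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        if abs(observed - expected) > tolerance:
            gaps[group] = (round(observed, 3), expected)
    return gaps


# Hypothetical age-band labels for 100 training records.
training_groups = ["18-34"] * 60 + ["35-54"] * 30 + ["55+"] * 10
# Hypothetical shares of the population the system is meant to serve.
expected = {"18-34": 0.30, "35-54": 0.40, "55+": 0.30}

print(representation_gaps(training_groups, expected))
```

Storing the returned gaps next to the mitigation applied (for example, resampling or collecting more data) gives a dated record of both the finding and the response.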

As such, clear documentation of data governance practices serves as the evidentiary foundation that regulators rely on when assessing whether an AI system was developed responsibly. Organizations must be able to produce records that account for dataset origin, quality controls, and bias mitigation procedures in a form that holds up to scrutiny. Article 10 effectively treats documentation as proof of diligence, and in a regulatory context, proof matters as much as performance.

Technical Documentation for High-Risk AI Systems

Article 11 of the EU AI Act sets documentation requirements that are considerably more demanding than what most organizations currently maintain for their software systems. Providers of high-risk AI systems must produce and retain detailed records that describe how a system was designed and how it was evaluated before deployment. The logic is that regulators cannot assess whether a system is safe and compliant if they cannot first understand how it was built.

System architecture and design documentation thus form the foundation of these requirements. Organizations must maintain records describing the technical structure of the AI system, the development methodology applied, and the intended purpose the system was built to serve. Documentation must reflect the system as it actually exists at any given point in time and be updated as the system evolves. Treating these records as living documents is a core expectation of the regulation, not an administrative preference.

Testing and performance documentation represents another critical dimension of Article 11 compliance. Organizations must maintain records of the procedures used to evaluate system performance and the results obtained. Regulators will use this documentation to assess whether the system was subjected to rigorous evaluation before being deployed in a high-risk context. A system that performs well in production but lacks documented evidence of structured testing will struggle to meet this standard.

The cumulative weight of Article 11's requirements reflects the broader regulatory expectation that high-risk AI systems are treated as accountable infrastructure rather than opaque tools. Organizations that approach technical documentation as a compliance formality rather than a governance discipline will find it difficult to produce the depth of evidence the Act demands when regulators come to review it.

EU AI Act Logging and Record-Keeping Requirements

Logging and record-keeping requirements under the EU AI Act were written to ensure that AI systems remain traceable and accountable once they are deployed. Article 12 requires that high-risk AI systems be set up to generate logs that allow organizations to monitor system behavior and reconstruct how specific outputs or decisions were produced. These records play a critical role in supporting oversight and enabling meaningful regulatory review.

Specifically, logs help organizations track how an AI system performs in real operational environments. They capture the inputs a system receives and the outputs it produces, as well as the operational events that shape its behavior over time. Maintaining these records enables organizations to identify anomalies and investigate potential issues before they escalate. For high-risk systems that influence consequential decisions, this level of traceability is essential for demonstrating responsible system management.
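To make the idea concrete, the sketch below writes each event as one timestamped JSON line and then retrieves the entries behind a given output. The function names, field names, and sample values are assumptions chosen for illustration; the Act does not prescribe a log format, and `io.StringIO` stands in here for an append-only log file.

```python
import io
import json
from datetime import datetime, timezone


def build_log_entry(system_id, model_version, event_type, inputs=None, output=None):
    """Build one structured, timestamped log entry as a plain dict."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system_id": system_id,
        "model_version": model_version,
        "event_type": event_type,  # e.g. "prediction", "override", "error"
        "inputs": inputs,
        "output": output,
    }


def write_entry(handle, entry):
    """Append the entry to a file-like object, one JSON document per line."""
    handle.write(json.dumps(entry, sort_keys=True) + "\n")


def entries_with_output(handle, output_value):
    """Return every logged entry whose output equals `output_value`."""
    entries = (json.loads(line) for line in handle if line.strip())
    return [entry for entry in entries if entry["output"] == output_value]


log = io.StringIO()  # stands in for an append-only log file
write_entry(log, build_log_entry("credit-scoring", "1.4.2", "prediction",
                                 inputs={"income_band": "B"}, output="refer"))
write_entry(log, build_log_entry("credit-scoring", "1.4.2", "prediction",
                                 inputs={"income_band": "D"}, output="approve"))

log.seek(0)
matches = entries_with_output(log, "refer")
print(matches[0]["inputs"])
```

Recording the model version with every entry is the design choice that matters most here: it lets a reviewer tie a specific output back to the exact system state that produced it.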

Record-keeping likewise supports regulatory transparency in a direct and practical sense. For example, when authorities request evidence during an audit or investigation, organizations must be able to produce documentation showing how the system has functioned over time. An organization that cannot reconstruct the basis for a system's outputs will find it difficult to demonstrate that appropriate safeguards were indeed in place at the time those outputs were generated.

Detailed operational records essentially serve as the evidence base that separates responsible AI deployment from unverifiable claims of compliance. Organizations that invest in structured logging practices position themselves to respond to regulatory scrutiny with certainty and accuracy rather than scrambling to reconstruct a governance trail after the fact. As enforcement activity under the Act increases, the quality and comprehensiveness of an organization's logging infrastructure will become an increasingly visible indicator of governance maturity.

Governance and Risk Documentation

In parallel with the technical documentation, the EU AI Act requires organizations to maintain records that demonstrate how AI risks are governed and managed throughout the lifecycle of a system. These records help regulators determine whether appropriate oversight structures exist and whether organizations actively monitor the potential impacts of the AI systems they deploy. Governance documentation in this case is the institutional layer that holds the broader compliance framework together.

Article 9 lays down the requirement for a documented risk management framework for high-risk AI systems. Organizations must maintain records that show how risks associated with an AI system are identified, assessed, and mitigated over time. This process is expected to continue throughout the operational life of the system rather than concluding once the system is deployed. Documentation should reflect how risk assessments are updated as systems evolve and as new operational contexts emerge.
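A risk register that appends each reassessment, rather than overwriting the previous one, is one way to show how risk evaluations changed over time. This is a sketch under assumptions: the class names, the 1 to 5 scoring scale, and the 180-day review interval are hypothetical choices, not values taken from the Act.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class RiskAssessment:
    assessed_on: date
    severity: int     # hypothetical 1-5 scale
    likelihood: int   # hypothetical 1-5 scale
    mitigation: str

    @property
    def score(self) -> int:
        return self.severity * self.likelihood


@dataclass
class RiskEntry:
    risk_id: str
    description: str
    history: list[RiskAssessment] = field(default_factory=list)

    def reassess(self, assessment: RiskAssessment) -> None:
        """Append a new assessment instead of overwriting the previous one."""
        self.history.append(assessment)

    def is_stale(self, today: date, max_age_days: int = 180) -> bool:
        """True if the risk was never assessed or the last review is too old."""
        if not self.history:
            return True
        return (today - self.history[-1].assessed_on).days > max_age_days


risk = RiskEntry("R-001", "Model underperforms for applicants with thin credit files")
risk.reassess(RiskAssessment(date(2025, 1, 10), 4, 3, "manual review of affected cases"))
risk.reassess(RiskAssessment(date(2025, 9, 1), 4, 2, "added training data for the segment"))

print([assessment.score for assessment in risk.history])
print(risk.is_stale(today=date(2026, 4, 9)))
```

Because the history is preserved, the register shows both the current score and the sequence of mitigations that led to it, while `is_stale` flags risks that have not been reviewed within the chosen interval.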

Human oversight forms an equally vital component of governance documentation. Article 14 requires organizations to ensure that high-risk AI systems operate under appropriate human supervision. Documentation must describe how human operators monitor system performance and under what conditions they are expected to review or override system outputs. Procedures for intervening when a system behaves in an unexpected or potentially harmful way must also be captured in writing.

Governance records serve as verifiable evidence that AI systems operate within a structured oversight environment, as opposed to functioning as autonomous and unaccountable tools. Maintaining clear documentation of risk management processes and oversight procedures allows organizations to demonstrate to regulators that appropriate safeguards remain in place as systems evolve. Regulators reviewing AI governance programs will look past high-level policy statements and assess the quality of the records behind them.

Why Maintaining This Documentation Is Operationally Challenging

Maintaining the documentation required for EU AI Act compliance is difficult for many organizations because AI systems are rarely developed and governed from a single centralized function. In large enterprises, data science teams and product groups frequently adopt AI tools independently from operational departments, each maintaining their own records in different systems and repositories. The information needed to demonstrate compliance ends up distributed across the organization in ways that make it hard to consolidate or present coherently to regulators.

Third-party AI systems add another layer of complexity. Many organizations rely on software platforms that incorporate embedded AI capabilities, yet have limited visibility into how those systems were developed or what data was used to train them. When the documentation regulators expect is held by external providers rather than the organizations deploying their systems, enterprises must determine how to obtain and manage that information in a form that satisfies their own regulatory obligations. Vendor relationships that were straightforward from a procurement standpoint become considerably more complicated when viewed through a compliance lens.

The evolving nature of AI systems themselves compounds the challenge. Models are regularly updated and expanded to meet shifting business needs, and each iteration may require corresponding updates across the full documentation framework. Organizations that lack coordinated processes for tracking these changes will find that their documentation drifts out of alignment with the systems it is meant to describe. That gap is precisely what regulators are equipped to identify.
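Drift of this kind can be detected mechanically by comparing the version of each deployed system with the version its documentation was last written against. The sketch below assumes both are available as simple mappings; the function name, system names, and version strings are hypothetical.

```python
def find_stale_documentation(deployed_versions, documented_versions):
    """Return systems whose documentation does not match the deployed version.

    A system with no documentation at all is reported with documented=None.
    """
    stale = {}
    for system, deployed in deployed_versions.items():
        documented = documented_versions.get(system)
        if documented != deployed:
            stale[system] = {"deployed": deployed, "documented": documented}
    return stale


# Hypothetical state pulled from a deployment registry and a document store.
deployed = {"fraud-model": "2.3.0", "churn-model": "1.1.0", "ocr-service": "0.9.4"}
documented = {"fraud-model": "2.1.0", "churn-model": "1.1.0"}

for system, versions in find_stale_documentation(deployed, documented).items():
    print(system, versions)
```

Running a comparison like this as part of each release makes a documentation update a visible step in the process instead of a task that depends on someone remembering it.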

How Organizations Can Start Building EU AI Act Documentation Processes

Organizations preparing for EU AI Act compliance should begin by establishing a solid understanding of where artificial intelligence systems operate across the enterprise. Most companies accumulate AI capabilities gradually as internal development efforts expand alongside vendor platforms and individually adopted software tools. A complete inventory of these systems provides the foundation for documenting how each one functions, what data it relies on, and the role it plays in business operations. That inventory is the prerequisite for everything that follows.

With visibility in place, organizations can then define internal documentation standards aligned with the regulation's requirements. Those standards should specify what information must be maintained for each AI system, covering training data governance, technical system descriptions, risk assessments, and oversight processes as distinct documentation obligations rather than a single consolidated report. Consistent documentation practices ensure that records are maintained in a format that supports both regulatory review and internal governance as the AI landscape within the organization evolves.
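An internal standard of this kind can be expressed as a list of required documentation categories checked against the AI inventory. In the sketch below, the category names mirror the four areas named above, while the function name and the inventory contents are assumptions made for illustration.

```python
# Categories mirror the areas named in the text; the identifiers are hypothetical.
REQUIRED_CATEGORIES = {
    "data_governance",
    "technical_documentation",
    "risk_management",
    "human_oversight",
}


def documentation_gaps(inventory):
    """Map each system name to the sorted list of categories it lacks."""
    gaps = {}
    for system, documented in inventory.items():
        missing = REQUIRED_CATEGORIES - set(documented)
        if missing:
            gaps[system] = sorted(missing)
    return gaps


# Hypothetical inventory: system name -> categories with documentation on file.
inventory = {
    "resume-screening": [
        "data_governance",
        "technical_documentation",
        "risk_management",
        "human_oversight",
    ],
    "chat-assistant": ["technical_documentation"],
}

print(documentation_gaps(inventory))
```

Treating each category as a separate obligation, rather than checking for a single report, matches the distinction drawn above and makes partial coverage visible per system.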

Clear ownership is equally essential to ensuring that documentation processes are sustainable. Data science, product, security, and compliance teams each contribute different parts of the information required for a complete compliance record. Assigning explicit responsibility for each documentation area ensures that records remain current as systems are updated and new AI capabilities are introduced. Ownership without accountability structures tends to erode over time, particularly in organizations where AI adoption is outpacing governance.

Centralized documentation management ties these efforts together. Maintaining records in a single structured location allows organizations to track updates and preserve version control, ensuring they can respond efficiently when regulators request evidence of compliance. Although it may seem distant, the deadline of December 2, 2027, leaves limited time for organizations still in the early stages of building these processes. The documentation framework an enterprise starts building today will determine how credibly it can account for its AI systems when regulators come asking.

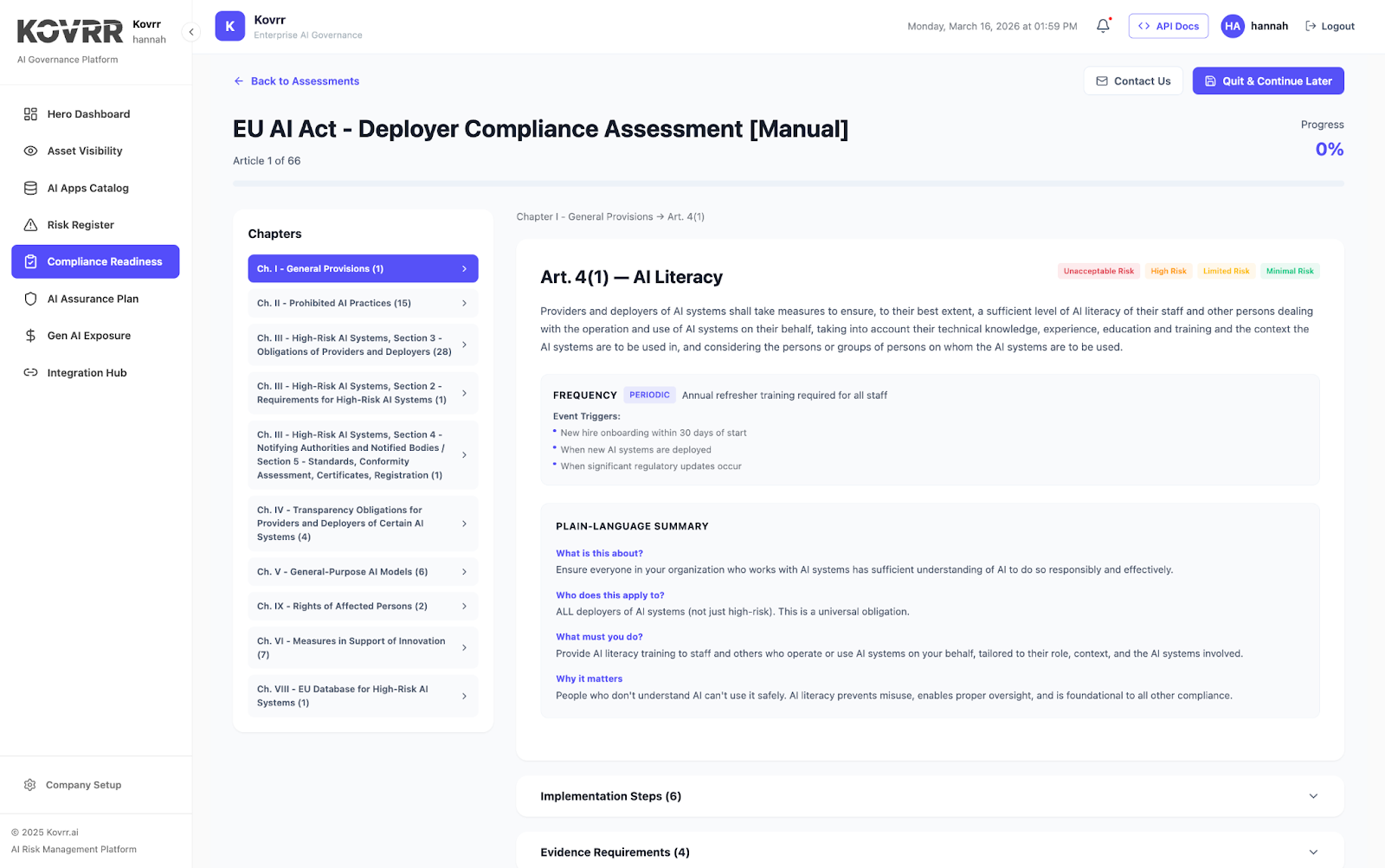

The EU AI Act introduces extensive documentation requirements that many organizations are only beginning to assess. Kovrr’s AI Compliance Readiness module includes automated EU AI Act capabilities that collect evidence, map it to EU AI Act Articles, and help teams prepare. Schedule a demo to get started.
