
EU AI Act Compliance Explained for CISOs and GRC Leaders

March 24, 2026

TL;DR

- The EU AI Act establishes the first comprehensive legal framework governing artificial intelligence, introducing enforceable oversight requirements for organizations that develop or deploy AI systems.

- The regulation applies to both EU and non-EU organizations whose AI systems are used within the Union, significantly expanding the scope of global AI governance obligations.

- AI systems are regulated according to a risk-based classification model, with high-risk applications facing the most stringent governance, documentation, and oversight requirements.

- Compliance requires organizations to inventory AI systems, classify risk levels, maintain technical documentation, implement governance processes, and monitor AI performance throughout the system lifecycle.

- Security and GRC leaders must establish visibility into enterprise AI usage and implement structured governance frameworks to ensure ongoing compliance with the EU AI Act.

The EU AI Act and Why It Matters for Security and Governance

The European Union's Artificial Intelligence Act (EU AI Act) represents the first comprehensive attempt by a major regulator to establish legal oversight of artificial intelligence. Its objective is to ensure that AI systems deployed across the EU operate safely, transparently, and in a manner that protects fundamental rights. While AI governance has been widely discussed for years, the EU AI Act elevates the conversation into a binding regulatory obligation for organizations that develop or deploy AI systems within the European market.

Most EU AI Act obligations become enforceable beginning August 2, 2026, and for enterprises, the implications extend well beyond legal teams. AI is now embedded across core business operations. Everything from customer service and fraud detection to recruitment and decision automation has been augmented with AI in recent years to improve efficiency. As these systems increasingly influence financial outcomes and access to services, regulators are coming to view them as sources of operational and societal risk. The EU AI Act addresses these concerns by establishing enforceable requirements tied to the potential impact of each system.

Security and governance leaders now carry new responsibilities as a result. CISOs and GRC teams must help ensure that AI systems are identified, assessed, and governed through structured oversight processes. That responsibility includes understanding how AI operates across the organization, maintaining documentation that demonstrates compliance, and monitoring risks throughout the system lifecycle. While some organizations have already begun building AI governance frameworks, many others will now need to start developing oversight processes that meet these new requirements.

Which Organizations Must Comply With the EU AI Act

The EU AI Act's purview is not limited to companies headquartered within the Union. Under Article 2, the regulation specifies that it applies to any organization that develops, deploys, or makes AI systems available within the EU market. In other words, companies based outside the EU are not exempt. If their AI systems are used within the Union or affect individuals located there, the regulation applies. The Act's applicability is ultimately determined by where AI is used, not where the organization behind it is based.

Article 3 then draws a meaningful distinction between the major actors in the AI ecosystem. Providers are organizations that develop AI systems or place them on the EU market under their own name. Deployers, on the other hand, are entities that use AI systems within their operations. Both groups carry specific obligations that vary depending on how a given system is categorized under the Act. The regulation further extends to importers and distributors that bring AI systems into the EU marketplace, as well as third parties that modify or maintain those systems.

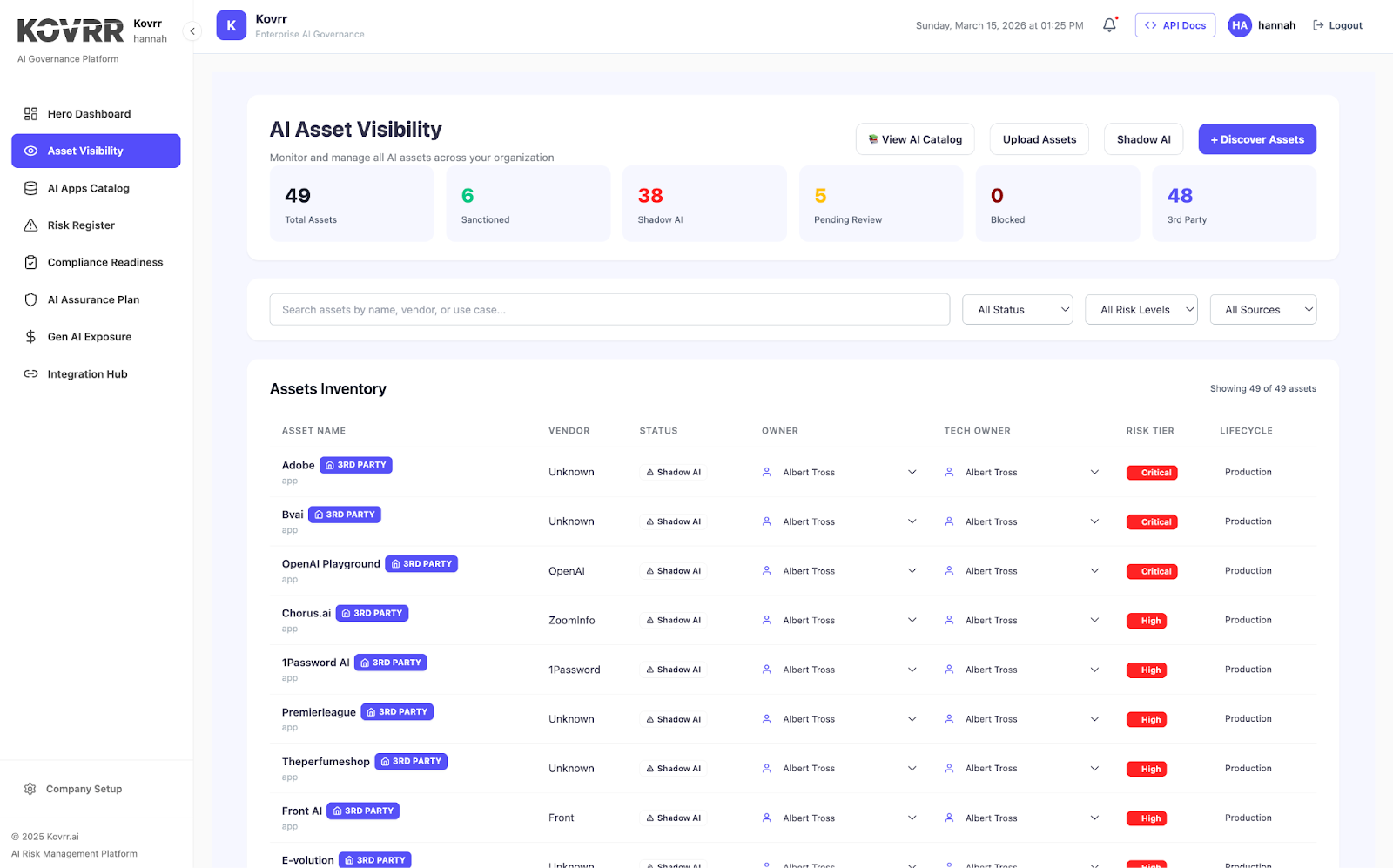

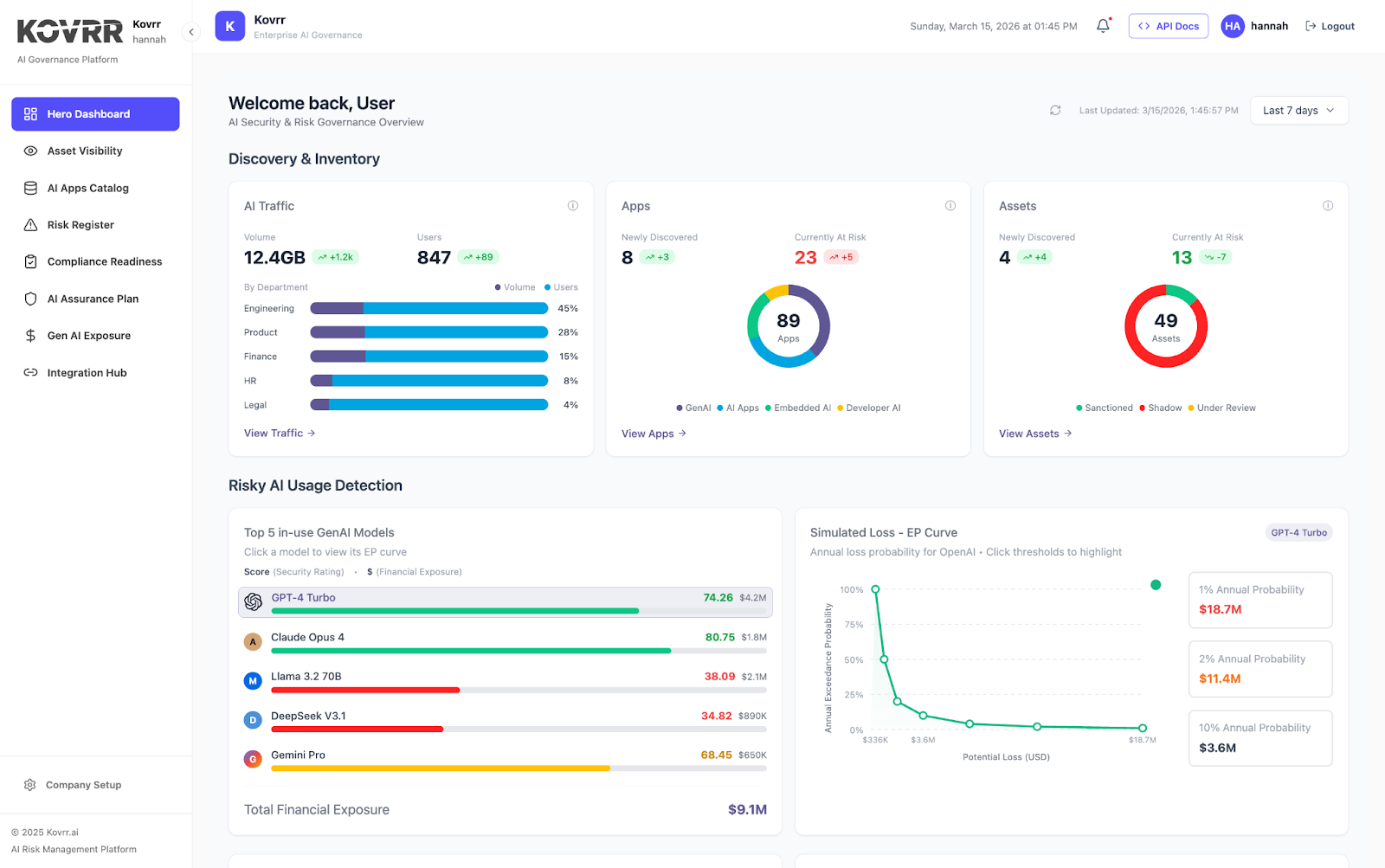

For CISOs and GRC leaders, the first practical challenge is AI asset visibility. Many enterprises now rely on AI capabilities embedded within third-party tools, internal applications, and vendor platforms. Determining which of those systems fall under the Act requires organizations to map AI use across the enterprise and establish which role they occupy (provider, deployer, importer, or distributor) in relation to each system. Ongoing reassessment is essential as new tools are adopted and existing systems are modified.

The EU AI Act’s Risk-Based Approach to Regulating AI Systems

At the center of the EU AI Act is a risk-based regulatory model. Instead of taking a one-size-fits-all approach and imposing uniform obligations across all AI systems, the Act categorizes applications according to the level of risk they pose to individuals, safety, and fundamental rights. Compliance requirements scale with potential impact, allowing regulators to concentrate oversight where the consequences are most significant.

Article 5 defines AI practices considered to pose unacceptable risk, including systems that manipulate human behavior in ways that could cause harm, exploit the vulnerabilities of specific groups, or enable social scoring. The category also covers real-time remote biometric identification in publicly accessible spaces for law enforcement purposes, subject to narrow exceptions that require prior authorization. Such practices are prohibited outright within the European Union, and organizations found operating them face the Act's most severe penalties.

High-risk AI systems receive the most comprehensive regulatory scrutiny. Article 6 and Article 7 establish the criteria for determining when a system meets that threshold, while Annex III segments high-risk applications by domain, such as employment decisions, credit evaluation, law enforcement, critical infrastructure, and access to essential services. Limited-risk systems face transparency obligations, while minimal-risk systems remain largely unrestricted.

This risk framework defines the starting point for any compliance effort. Organizations must first inventory the AI systems they operate, then classify each one according to the Act's risk structure. For many enterprises, that process alone will surface gaps in visibility, revealing AI capabilities embedded in third-party tools or legacy platforms that have never been formally assessed. From there, the applicable governance and documentation requirements follow.
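The inventory-then-classify sequence can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the `AISystem` record, the use-case tags, and the `interacts_with_people` flag are hypothetical placeholders, not the Act's legal tests, and any real classification would need review against Article 5, Article 6, and Annex III.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical, simplified tags for illustration only.
PROHIBITED_USES = {"social_scoring", "harmful_manipulation"}
HIGH_RISK_DOMAINS = {
    "employment",
    "credit_evaluation",
    "law_enforcement",
    "critical_infrastructure",
    "essential_services",
}


@dataclass
class AISystem:
    name: str
    owner: str
    use_case: str                        # one of the tags above, or anything else
    interacts_with_people: bool = False  # assumed proxy for transparency duties


def classify(system: AISystem) -> RiskTier:
    """Return a first-pass risk tier; the most restrictive match wins."""
    if system.use_case in PROHIBITED_USES:
        return RiskTier.UNACCEPTABLE
    if system.use_case in HIGH_RISK_DOMAINS:
        return RiskTier.HIGH
    if system.interacts_with_people:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


inventory = [
    AISystem("resume-screener", "HR", "employment"),
    AISystem("support-chatbot", "Customer Service", "customer_support", True),
    AISystem("log-anomaly-detector", "IT", "internal_analytics"),
]

for system in inventory:
    print(f"{system.name}: {classify(system).value}")
```

A first-pass triage like this is only useful for deciding which systems need formal legal assessment first; it does not replace that assessment.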

What Qualifies as a High-Risk AI System Under the EU AI Act

While the EU AI Act establishes several risk categories, the bulk of its compliance obligations apply to systems classified as high risk. Article 6 sets out the criteria for that designation, drawing on a framework designed to concentrate regulatory scrutiny where the potential for harm is greatest. Understanding where that threshold sits is essential for any organization operating AI within the EU market.

High-risk systems generally fall into two groups: those that influence consequential decisions about individuals, and those that support critical societal functions. Annex III covers a broad range of applications, including automated hiring and performance evaluation tools, critical infrastructure management, educational admissions, law enforcement, and eligibility determinations for public benefits. The common thread is not the sophistication of the technology but the significance of its potential impact on people's lives.

Notably, classification as high risk does not amount to a prohibition. Rather, it signals that the system must operate within a structured governance framework. Organizations responsible for high-risk systems are required to implement risk management processes, maintain detailed technical documentation, ensure meaningful human oversight, and monitor performance across the full system lifecycle. Failure to meet these obligations exposes organizations to enforcement action and substantial financial penalties.

Mapping which systems meet the high-risk threshold is fundamental to any compliance program. Enterprises routinely deploy AI across multiple departments, often without centralized visibility into where those systems sit or how they are used. In many cases, high-risk capabilities are embedded within broader platforms or vendor tools, making them easy to overlook during an initial assessment. Without a comprehensive inventory, implementing the safeguards the Act requires becomes difficult to execute consistently and harder still to demonstrate to regulators.

What EU AI Act Compliance Requires in Practice

High-risk AI systems carry a defined set of operational obligations under the EU AI Act, spanning the full lifecycle of the system. The regulation's requirements are designed to ensure that AI systems are developed and deployed in a controlled and accountable manner. Articles 9 through 12 establish the core governance and documentation expectations that organizations must meet to demonstrate compliance. For enterprises still in the early stages of AI governance, the specificity of these requirements may represent a significant step up.

Article 9, for example, requires entities to establish and maintain a formal risk management system. That system must identify potential risks associated with the AI, evaluate their potential impact, and implement mitigation measures that remain in place throughout the system's operational life. Risk management under the EU AI Act is therefore not a one-time assessment but a continuous process tied to how the system performs in practice. As systems are updated or deployed in new contexts, the risk management process must be revisited accordingly.
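One way to make that continuous character concrete is a risk register that flags a system for re-review either on a schedule or whenever the system changes. The sketch below is illustrative only: the `RiskRegister` and `RiskEntry` names, the 1-to-5 scoring scale, the 90-day cadence, and the score threshold are all assumptions, none of which are prescribed by the Act.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class RiskEntry:
    description: str
    impact: int        # 1 (low) to 5 (severe), hypothetical scale
    likelihood: int    # 1 (rare) to 5 (frequent), hypothetical scale
    mitigation: str = ""

    @property
    def score(self) -> int:
        return self.impact * self.likelihood


@dataclass
class RiskRegister:
    system_name: str
    last_reviewed: date
    review_interval_days: int = 90   # assumed cadence, not a legal requirement
    entries: list[RiskEntry] = field(default_factory=list)
    changed_since_review: bool = False

    def record_change(self) -> None:
        """Call when the system is updated or deployed in a new context."""
        self.changed_since_review = True

    def review_due(self, today: date) -> bool:
        """A review is due if the interval has lapsed or the system changed."""
        interval = timedelta(days=self.review_interval_days)
        return self.changed_since_review or (today - self.last_reviewed) > interval

    def unmitigated(self, threshold: int = 10) -> list[RiskEntry]:
        """Entries at or above the threshold with no mitigation recorded."""
        return [e for e in self.entries if e.score >= threshold and not e.mitigation]


register = RiskRegister("resume-screener", last_reviewed=date(2026, 1, 1))
register.entries.append(RiskEntry("Biased ranking of candidates", impact=4, likelihood=3))
register.entries.append(
    RiskEntry("Model drift", impact=3, likelihood=2, mitigation="Quarterly re-test")
)

print(register.review_due(date(2026, 2, 1)))
register.record_change()
print(register.review_due(date(2026, 2, 1)))
print([e.description for e in register.unmitigated()])
```

Tying the review trigger to change events as well as to the calendar reflects the point that risk management has to follow the system as it evolves.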

Data governance is also a central focus of the compliance framework. Article 10 requires that training, validation, and testing datasets be relevant, representative, and, as far as possible, free from errors that could introduce bias or distort system performance. The quality of the data on which an AI system is built directly shapes the reliability and fairness of its outputs, making data management a substantive compliance obligation rather than a background concern. Organizations that have not previously applied formal data governance standards to their AI pipelines will need to close that gap.

Technical documentation requirements add another layer of accountability. Article 11 requires providers to maintain records describing system design, development methods, and testing procedures. Article 12 then extends those obligations to logging capabilities, ensuring organizations can trace how AI systems generate outputs and reach decisions. Maintaining that level of documentation requires deliberate investment in tooling and process, particularly for organizations managing multiple high-risk systems across different business units.
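As a rough illustration of what traceable logging can look like in practice, the sketch below writes one structured JSON record per AI decision using Python's standard `logging` and `json` modules. The field names, the `ai_audit` logger name, and the `log_decision` helper are hypothetical; the Act does not prescribe this schema, and a production system would also need retention, access control, and tamper protection.

```python
import json
import logging
from datetime import datetime, timezone
from typing import Optional

logging.basicConfig(level=logging.INFO, format="%(message)s")
audit_logger = logging.getLogger("ai_audit")  # hypothetical logger name


def log_decision(
    system_name: str,
    model_version: str,
    input_ref: str,
    output: str,
    reviewer: Optional[str] = None,
) -> str:
    """Emit one JSON audit record for a single AI output and return it."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system": system_name,
        "model_version": model_version,
        # Store a reference to the input rather than the raw data, on the
        # assumption that inputs may contain personal information.
        "input_ref": input_ref,
        "output": output,
        "human_reviewer": reviewer,
    }
    line = json.dumps(record, sort_keys=True)
    audit_logger.info(line)
    return line


line = log_decision(
    system_name="resume-screener",
    model_version="2.3.1",
    input_ref="application/84213",
    output="advance_to_interview",
    reviewer="j.doe",
)
```

Recording the model version and a human reviewer alongside each output is a design choice that makes it easier to reconstruct later which system state produced a given decision and who oversaw it.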

These provisions taken together establish a governance infrastructure that treats AI systems as dynamic, monitored assets. Building that infrastructure requires putting in place processes for ongoing documentation, risk evaluation, and performance monitoring across every high-risk system the organization operates. Enterprises that approach these requirements as a one-time compliance exercise are likely to find themselves underprepared when regulators begin scrutinizing how AI governance holds up over time.

Governance Responsibilities for CISOs and GRC Leaders

The EU AI Act's emphasis on accountability and oversight makes clear that AI governance cannot remain confined to technical teams alone. The regulation requires organizations to establish clear governance structures that define how AI systems are evaluated, documented, and monitored throughout their lifecycle. Meeting that standard demands active involvement from security and risk leadership, not just the engineers and data scientists who build and maintain the systems or the lawyers who interpret the regulation.

CISOs occupy a natural position within that governance structure. AI applications routinely interact with sensitive data and core infrastructure, making them a legitimate concern for security leadership. Monitoring AI systems for vulnerabilities and misuse, maintaining logging capabilities, evaluating system integrity, and integrating AI oversight into existing security processes all fall within the scope of what the Act effectively demands. Organizations that treat AI risk as separate from broader cybersecurity risk will find that approach difficult to sustain under regulatory scrutiny.

GRC leaders bring a different but equally critical layer of oversight. Governance and compliance teams are typically responsible for managing regulatory frameworks, developing internal policy, and maintaining audit readiness across the enterprise. Under the EU AI Act, those responsibilities extend to maintaining documentation for high-risk systems, overseeing AI deployment policies, and coordinating compliance reporting when regulators request evidence of structured oversight. The breadth of the Act's documentation requirements makes that function more demanding than most traditional compliance programs.

Effective AI governance depends on sustained collaboration across multiple functions. Security teams, compliance leaders, data scientists, product owners, and legal advisors each contribute to ensuring that AI systems are deployed responsibly and monitored consistently. Without clear ownership and defined governance processes, accountability tends to fragment across teams, creating the kind of visibility gaps that regulators are likely to probe. Organizations that establish those structures early will be better positioned to demonstrate compliance as enforcement activity increases.

Why Building Visibility Into AI Usage Is a Major Compliance Challenge

Gaining a complete, detailed picture of where artificial intelligence operates across the organization is among the most demanding aspects of EU AI Act compliance. In many enterprises, AI adoption has already expanded quickly across departments, business units, and teams, often without centralized governance or consistent documentation. Security and governance leaders thus frequently find themselves managing regulatory obligations for systems they have never fully catalogued.

Moreover, AI capabilities now appear across a wide range of enterprise tools. Marketing teams use AI for customer engagement and analytics. Human resources departments rely on automated screening and evaluation tools. Finance teams integrate AI into fraud detection and credit analysis workflows. Third-party platforms compound the challenge further, often introducing embedded AI features that organizations adopt without fully assessing their regulatory implications.

Fragmented AI adoption creates significant obstacles when organizations attempt to comply with the Act's risk classification requirements. Classifying AI systems under the regulation's framework requires enterprises to first identify every system in use, understand how each one operates, and evaluate the potential risks it presents. Without that comprehensive inventory, the classification process becomes difficult to perform consistently and nearly impossible to defend under regulatory scrutiny.

Establishing enterprise-wide visibility into AI usage is, therefore, a fundamental step toward compliance, and skipping it undermines all of the governance work that follows. A complete inventory allows organizations to classify risk levels accurately, maintain the documentation the Act requires, and ensure that processes extend across the full AI lifecycle rather than applying only to the systems that happen to be well known internally.

How Organizations Can Begin Preparing for EU AI Act Compliance

Compliance with the EU AI Act begins with a structured understanding of how artificial intelligence is used across the organization. Most enterprises accumulate AI capabilities gradually through internal development, embedded software features, and third-party tools adopted at the department level. Before any governance framework can be applied, organizations need to know where these systems exist and how they interact with business operations.

A centralized inventory provides the foundation for documenting each system's purpose, associated data sources, and operational scope. With that inventory in place, organizations can evaluate each system against the Act's risk classification framework. Determining which systems qualify as high risk defines the scope of the compliance burden and identifies where the most demanding regulatory obligations will apply. Systems that influence hiring decisions or financial access, for example, carry the most significant requirements and warrant early attention.

Governance processes must be built to match those obligations. Risk management procedures and logging capabilities ensure that AI systems operate within controlled and auditable parameters. Clear ownership across security, compliance, legal, and technical functions allows those processes to hold up as systems evolve. The organizations that establish that foundation early will be better positioned when August 2, 2026, arrives. Those that wait will find the window for building a credible compliance program increasingly narrow.
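A simple completeness check can show where inventory records are still missing the details needed before classification can proceed. The sketch below assumes inventory entries are plain dictionaries; the field names (`purpose`, `data_sources`, `operational_scope`, `risk_tier`) and the `find_gaps` helper are hypothetical and would be adapted to whatever inventory tooling an organization actually uses.

```python
# Fields assumed for illustration; the first three mirror the items named
# above, and "risk_tier" is added as the expected result of classification.
REQUIRED_FIELDS = ("purpose", "data_sources", "operational_scope", "risk_tier")


def find_gaps(inventory: list[dict]) -> dict[str, list[str]]:
    """Map each system name to the required fields that are missing or empty."""
    gaps: dict[str, list[str]] = {}
    for record in inventory:
        missing = [name for name in REQUIRED_FIELDS if not record.get(name)]
        if missing:
            gaps[record.get("name", "<unnamed>")] = missing
    return gaps


inventory = [
    {
        "name": "resume-screener",
        "purpose": "Rank job applicants",
        "data_sources": ["ATS exports"],
        "operational_scope": "EU hiring",
        "risk_tier": "high",
    },
    {
        "name": "vendor-chat-widget",
        "purpose": "Answer customer questions",
        "data_sources": [],
        "operational_scope": "",
    },
]

for name, missing in find_gaps(inventory).items():
    print(f"{name}: missing {', '.join(missing)}")
```

Running a check like this on a schedule gives governance teams a short list of systems whose documentation needs attention before their risk tier can be defended.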

The EU AI Act implementation timeline is approaching quickly, and many organizations are only beginning to assess their readiness. Kovrr’s AI Compliance Assurance module automatically collects evidence, maps it to EU AI Act Articles, and helps organizations prepare. Get started now.
