
EU AI Act Compliance Starts With Operationalizing AI Governance

May 13, 2026

TL;DR

- The EU AI Act requires continuous governance, yet most organizations remain stuck between understanding regulatory expectations and operationalizing them across fragmented systems and teams.

- Compliance depends on maintaining auditable evidence of governance practices, a requirement that exposes gaps in ownership, coordination, and system-level visibility.

- Fragmented workflows and manual processes make it difficult to sustain compliance over time, especially as AI systems evolve and outpace governance approaches.

- Embedding risk management into daily AI operations creates a foundation for compliance, enabling organizations to track exposure and maintain alignment as conditions change.

- EU AI Act automation tools enable sustained compliance by continuously collecting, mapping, and maintaining evidence, reducing operational strain while supporting audit readiness.

Awareness Is Not a Compliance Strategy

The European Union's (EU) AI Act is among the most consequential regulatory developments in enterprise technology in years. For organizations deploying artificial intelligence at scale, a category that now covers a large share of enterprises, it introduces a formal, continuous obligation to demonstrate governance. The regulation has been public long enough that most organizations have a working understanding of what it requires. What has proven harder to close is the gap between understanding the regulation and being ready for it.

That distance between the two is largely operational. The requirements themselves are easy enough to understand conceptually. The difficulty is in translating them into the actual fabric of how an organization manages AI, across teams that have different tools and different definitions of what "documented" means. Indeed, most enterprises have AI systems spread across business units with no central inventory and ownership structures that make it genuinely difficult to answer the basic question of who is responsible for which governance activities.

Compliance programs that approach the EU AI Act as a documentation exercise will struggle to become compliant. The regulation is designed to test whether governance is real: whether it is embedded in how AI systems are built, deployed, and monitored over time, or exists only in policy documents that nobody reads. The organizations that will meet that test are those that stop treating compliance as a legal function and start treating it as an ongoing operational one.

What the EU AI Act Demands of Organizations

The EU AI Act is built around a risk-tiered framework that assigns compliance obligations based on how an AI system is used and what harm it could cause. High-risk systems, such as those deployed in employment decisions, credit assessments, healthcare settings, law enforcement, and similarly sensitive domains, face the most stringent requirements, and the list of qualifying use cases is broader than many organizations initially assume.

For those systems, the regulation expects ongoing risk management, structured technical documentation, human oversight mechanisms, and logging that supports post-deployment monitoring. Accuracy and robustness standards apply as well. Organizations should not think of these as one-time deliverables that get filed and forgotten. They are, instead, very much continuous obligations that need to hold up under regulatory scrutiny at any point in time, meaning that the evidence supporting them has to stay current.

What makes this particularly demanding is the expectation of traceability. Regulators expect evidence: documented proof that governance processes exist and are producing measurable outcomes. In most organizations today, assembling that evidence means pulling from a dozen different systems, chasing down ownership across multiple teams, and reconciling records that were never designed to integrate with one another.

Why Operationalizing Compliance Is Hard

The friction organizations encounter when trying to meet EU AI Act requirements comes down to execution. Governance artifacts tend to live in different places depending on who created them and when, with no consistent structure for how they are organized. Ownership is similarly fragmented across teams, with no single function holding a complete picture of whether what exists actually adds up to compliance. That fragmentation is manageable when someone is actively coordinating across it. However, most of the time, nobody is.

Compounding the problem is the pace at which AI systems change. A model that was assessed six months ago is very likely to have been retrained or repurposed since then. Static assessments degrade quickly, and manual processes for refreshing them are slow and inconsistent. The result is a compliance posture that looks reasonable in theory but cannot survive a serious audit.
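One way to make that degradation visible is to compare each system's last assessment date with its last change date. The sketch below is a minimal illustration, not a prescribed method: the `AISystem` record, the example systems, and the 180-day threshold are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class AISystem:
    name: str
    last_assessed: date  # date of the most recent risk assessment
    last_changed: date   # date of the most recent retrain, repurpose, or integration change


def stale_assessments(systems, today, max_age_days=180):
    """Return names of systems whose assessment predates a change or is older than max_age_days."""
    stale = []
    for s in systems:
        changed_since = s.last_changed > s.last_assessed
        too_old = (today - s.last_assessed).days > max_age_days
        if changed_since or too_old:
            stale.append(s.name)
    return stale


# Hypothetical systems, for illustration only.
systems = [
    AISystem("resume-screener", date(2025, 11, 1), date(2026, 3, 15)),
    AISystem("support-chatbot", date(2026, 4, 1), date(2026, 2, 10)),
]
print(stale_assessments(systems, today=date(2026, 5, 13)))  # ['resume-screener']
```

Run on a schedule against a real inventory, a check like this turns a six-month-old assessment from a silent assumption into a flagged item.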

Scale accelerates the problem. Informal governance can hold together when an organization has a handful of AI systems in play. As deployments expand across business units, the coordination overhead compounds. At some point, the manual effort required to maintain consistent oversight exceeds what teams can absorb, and coverage starts to erode in ways that are not always visible until something goes wrong.

Risk Management as the Core of EU AI Act Readiness

A credible compliance program under the EU AI Act starts with a risk management program that operates not as a checkbox, but as a living process embedded into how AI systems are deployed and monitored over time. Organizations that treat risk management as a discrete activity, something to complete before launch and revisit annually, will find that their compliance posture degrades almost as soon as it is established.

Effective AI risk management requires identifying potential harms and loss scenarios before deployment, assessing their likelihood and severity, and implementing controls that reduce exposure as conditions change. The less visible part of that work is documentation: being able to show a regulator, at any given moment, how risks were identified and what was done about them. That evidentiary thread is what regulators are looking for, and it is what most organizations struggle to maintain consistently.
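As a rough sketch of what that evidentiary thread might look like in data, the example below records each risk with a likelihood, a severity, and a dated list of controls. The 1-to-5 scales, the multiplication-based score, the threshold of 12, and the sample risks are all hypothetical choices for illustration; the regulation does not prescribe a scoring formula.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Risk:
    description: str
    likelihood: int  # 1 (rare) to 5 (almost certain); hypothetical scale
    severity: int    # 1 (minor) to 5 (critical); hypothetical scale
    controls: list = field(default_factory=list)  # (date, description) pairs

    @property
    def score(self):
        return self.likelihood * self.severity

    def add_control(self, when, description):
        # Keeping the date alongside the control is what makes the record auditable.
        self.controls.append((when, description))


def needs_attention(risks, threshold=12):
    """Risks scoring at or above threshold with no documented control, highest score first."""
    open_risks = [r for r in risks if r.score >= threshold and not r.controls]
    return sorted(open_risks, key=lambda r: r.score, reverse=True)


# Hypothetical register entries.
risks = [
    Risk("Biased ranking in hiring model", likelihood=4, severity=5),
    Risk("Training data used beyond consent scope", likelihood=3, severity=4),
    Risk("Prompt injection via support chatbot", likelihood=2, severity=3),
]
risks[0].add_control(date(2026, 4, 2), "Quarterly disparate-impact test")

for r in needs_attention(risks):
    print(r.score, r.description)  # 12 Training data used beyond consent scope
```

The point of the structure is less the score than the history: each control carries a date, so the register can answer what was done about a risk and when.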

The risks the EU AI Act is most concerned with are concrete and consequential. Bias embedded in automated decision-making can produce discriminatory outcomes at scale. Security vulnerabilities in model infrastructure create exposure that extends well beyond the AI system itself. Data misuse across training pipelines can violate fundamental rights in ways that are difficult to detect and harder to remediate after the fact. Regulators expect organizations to be able to demonstrate active management of these risks, not merely showcase their awareness.

Continuous monitoring is what gives a compliance program its durability. AI systems are not static, and neither are the risks they carry. Models get retrained on new data. Use cases expand beyond their original scope. Integrations with other systems introduce dependencies that were not part of the original risk assessment. Governance processes that cannot track those changes in real time will accumulate blind spots, and those blind spots tend to surface at the worst possible moment.

The Role of Automation in Sustaining Compliance

Manual compliance workflows have a ceiling, and most organizations are closer to it than they realize. As AI deployments expand, the volume of systems requiring documentation and the granularity of evidence regulators expect both grow simultaneously. Teams that are already stretched find themselves spending more time assembling compliance records than actually managing risk. Eventually, the operational burden becomes unsustainable, and adding headcount alone rarely resolves it.

EU AI Act automation changes the economics of governance. When evidence collection and documentation mapping run as structured, repeatable workflows rather than ad hoc efforts stitched together before an audit, compliance becomes something an organization can maintain continuously rather than reconstruct periodically under pressure.

The more meaningful development is what automation makes possible at the integration layer. Rather than requiring compliance teams to manually inventory what documentation exists and trace it against regulatory requirements, modern platforms can connect directly to the tools organizations already use and do that work programmatically. Evidence gets pulled, mapped to specific EU AI Act articles, and assessed for coverage without requiring anyone to coordinate across a dozen different systems by hand.

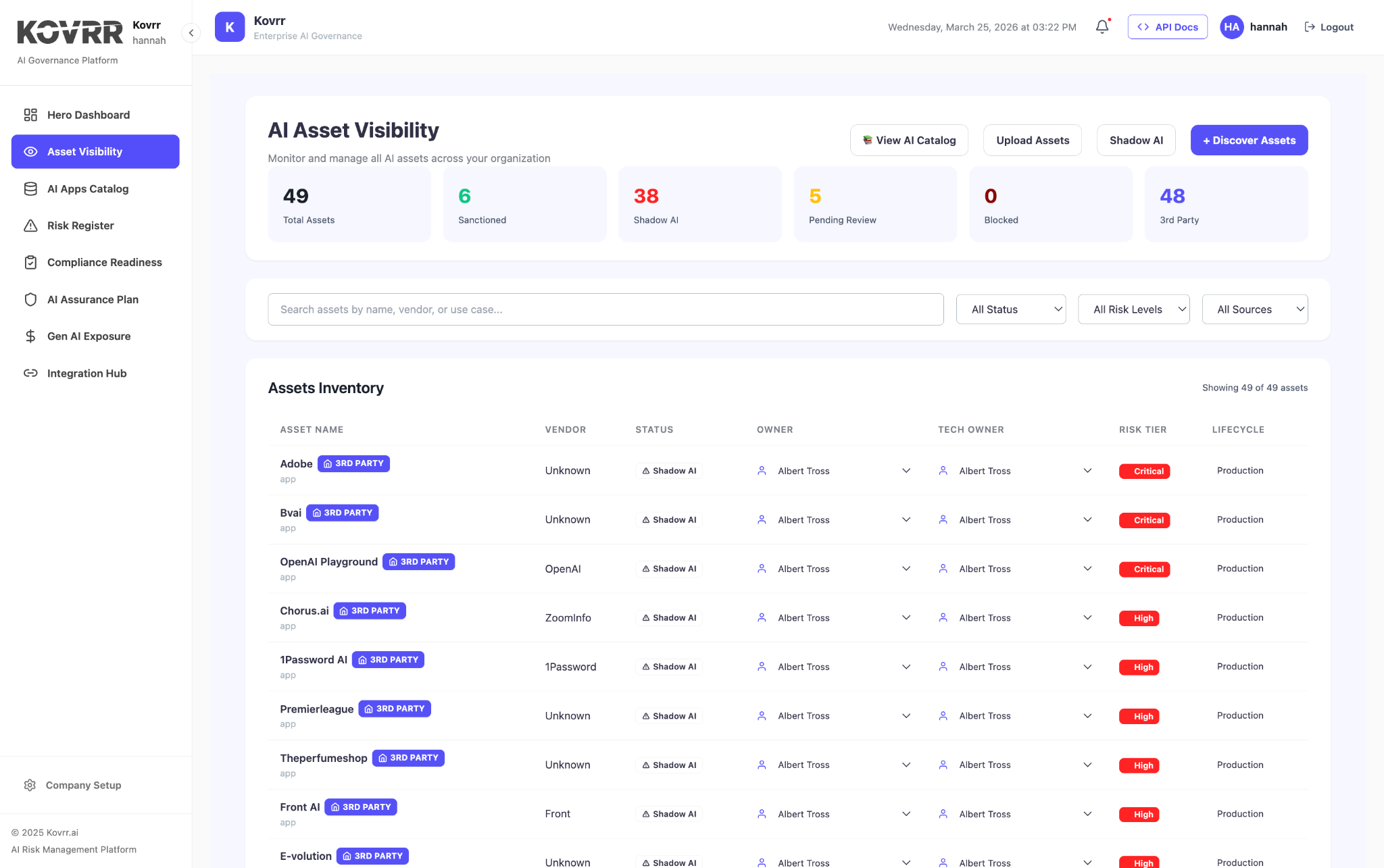
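The mapping step described above can be sketched in a few lines. This is a simplified illustration, not how any particular platform works: the evidence records and their source names are hypothetical, and the requirement list is only a subset of the high-risk obligations, keyed by their article numbers in the Act.

```python
# Illustrative subset of high-risk obligations, keyed by EU AI Act article.
REQUIREMENTS = {
    "Art. 9": "Risk management system",
    "Art. 11": "Technical documentation",
    "Art. 12": "Record-keeping (logging)",
    "Art. 14": "Human oversight",
    "Art. 15": "Accuracy, robustness and cybersecurity",
}

# Hypothetical evidence records, as they might be pulled from existing tools.
evidence = [
    {"source": "ticketing", "title": "Q1 model risk review", "articles": ["Art. 9"]},
    {"source": "wiki", "title": "Model card v3", "articles": ["Art. 11", "Art. 15"]},
    {"source": "logging", "title": "Inference audit log config", "articles": ["Art. 12"]},
]


def coverage(requirements, evidence):
    """Map each required article to the titles of the evidence items that support it."""
    mapped = {article: [] for article in requirements}
    for item in evidence:
        for article in item["articles"]:
            if article in mapped:
                mapped[article].append(item["title"])
    return mapped


def gaps(mapped):
    """Articles with no supporting evidence at all."""
    return [article for article, items in mapped.items() if not items]


print(gaps(coverage(REQUIREMENTS, evidence)))  # ['Art. 14']
```

In this toy data set, human oversight is the only obligation without any supporting record, which is exactly the kind of gap that is hard to spot when evidence lives in separate tools.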

Where documentation is missing entirely, some platforms can generate policy templates and structured evidence artifacts that meet regulatory standards, giving teams a foundation to build from rather than a blank page to fill. Kovrr's AI Compliance Readiness module is built around this capability, connecting into existing infrastructure and automating the evidence pipeline so that audit readiness is a continuous state rather than a periodic sprint.

Building a Governance Foundation That Can Scale

Organizations that approach EU AI Act compliance as a continuous program rather than a project tend to fare better, and the difference shows up well before an audit. A program has ownership, structure, and continuity, while a project has a deadline. The regulation's demands do not end once the first assessment is submitted, and governance models built around discrete deliverables tend to degrade precisely when sustained oversight matters most.

The foundation starts with visibility. Before governance can be applied consistently, organizations need a reliable picture of what AI assets exist across the business, where they are deployed, and who holds accountability for them. Without that inventory, governance efforts get applied unevenly, concentrated around the systems that are already well-documented, while others operate outside any formal oversight structure. The systems that fall outside that perimeter are often the ones that carry the most unexamined risk.
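A minimal sketch of such an inventory, assuming a flat list of records with hypothetical field names (`system`, `unit`, `owner`, `assessed`), shows how quickly the unowned and unassessed systems can be surfaced once the data sits in one place:

```python
from collections import defaultdict

# Hypothetical inventory entries; field names are assumptions for this example.
inventory = [
    {"system": "credit-scoring-model", "unit": "Lending", "owner": "risk-analytics", "assessed": True},
    {"system": "resume-screener", "unit": "HR", "owner": None, "assessed": False},
    {"system": "marketing-copy-assistant", "unit": "Marketing", "owner": "brand-team", "assessed": False},
]


def oversight_gaps(inventory):
    """Group system names by the kind of oversight gap they show."""
    found = defaultdict(list)
    for entry in inventory:
        if not entry["owner"]:
            found["no_owner"].append(entry["system"])
        if not entry["assessed"]:
            found["not_assessed"].append(entry["system"])
    return dict(found)


print(oversight_gaps(inventory))
# {'no_owner': ['resume-screener'], 'not_assessed': ['resume-screener', 'marketing-copy-assistant']}
```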

Structured risk assessments build on that foundation by establishing a baseline that can be updated as conditions change. Centralized documentation gives compliance teams a single authoritative record to work from, rather than a fragmented collection of files spread across departments with no clear version control. Standardized validation processes create the repeatability that scaling requires, and that repeatability only holds when the data governance and security controls underneath it are built to the same standard.

Those three layers together are what a defensible AI governance program runs on. For a practical breakdown of what building each one requires, read [Operationalizing AI Governance: A 3-Pillar Framework for Practitioners].

The ultimate goal is a governance model that runs continuously, not one that gets activated when an audit is approaching. Regulations will continue to develop, enforcement will mature, and the AI systems organizations are deploying today will look meaningfully different in two years. Governance built for that reality treats compliance as infrastructure rather than a response, something that absorbs change without requiring a rebuild every time circumstances shift.

The Cost of Getting It Wrong

The penalties under the EU AI Act are designed to be consequential. Fines for the most serious violations can reach EUR 35 million or seven percent of global annual turnover, whichever is higher, and for organizations with significant AI footprints, that exposure is a material liability rather than a remote possibility. Enforcement is still maturing, but the regulatory appetite for meaningful action is apparent, and organizations that have not established demonstrable governance will have little to stand behind when scrutiny arrives.
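To put the ceiling in concrete terms, the short calculation below assumes the top penalty tier in the Act's penalty provisions, which caps fines for the most serious violations at EUR 35 million or seven percent of total worldwide annual turnover, whichever is higher. The turnover figures are hypothetical, and the result is an upper bound, not a prediction of any actual fine.

```python
FIXED_CAP_EUR = 35_000_000  # fixed ceiling for the most serious violations
TURNOVER_PERCENT = 7        # percentage of total worldwide annual turnover


def max_fine_eur(worldwide_annual_turnover_eur: int) -> int:
    """Upper bound of the top penalty tier: the higher of the fixed cap and 7% of turnover."""
    turnover_based = worldwide_annual_turnover_eur * TURNOVER_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based)


# Hypothetical turnover figures.
print(max_fine_eur(2_000_000_000))  # 140000000
print(max_fine_eur(100_000_000))    # 35000000
```

For a company with EUR 2 billion in turnover, the percentage-based ceiling is four times the fixed one, which is why the exposure scales with the size of the business.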

Regulatory penalties are only part of the exposure. Reputational damage in the wake of a compliance failure tends to outlast the fine itself. Institutional investors have become increasingly attentive to how organizations govern AI, and a public enforcement action carries a signal about organizational maturity that is difficult to walk back. The erosion of trust among customers and partners compounds that signal in ways that do not appear on a balance sheet but are no less real for it.

Operational disruption is a less-discussed but equally real risk. If a system has to be suspended or substantially modified to meet compliance requirements mid-deployment, the downstream effects on the business can be significant. Building governance from the beginning is materially less disruptive than retrofitting it after the fact.

The Trajectory of AI Governance Regulation

The EU AI Act may be the first comprehensive regulation of its kind, but the momentum it represents is unlikely to reverse. Other jurisdictions, such as Japan, Singapore, and Canada, are developing their own frameworks, and the expectations placed on organizations that deploy AI will continue to rise. Organizations that have not built governance infrastructures capable of absorbing that pressure will find themselves repeatedly caught off guard as requirements tighten.

The companies that will manage this well are not necessarily the ones with the largest compliance teams or the most elaborate documentation. They are the ones that have treated governance as an operational capability rather than a regulatory response, building the processes and institutional muscle to absorb new requirements without starting from scratch each time circumstances change.

There is also a broader case to be made that goes beyond regulatory obligation. Organizations that govern AI well are better positioned to deploy it responsibly and build the kind of institutional trust that is increasingly difficult to earn back once it is lost. The EU AI Act creates a meaningful forcing function. For enterprises willing to treat it as one, the work of becoming compliant and the work of becoming genuinely capable at AI governance are the same.

For organizations navigating EU AI Act requirements, Kovrr helps automate compliance workflows, from evidence collection to audit-ready reporting, making governance easier to sustain over time. Book a demo to learn more.
